Is it possible to use wget for copying files on my own system?

I want to move/copy large files on my own system, and I want to use the wget command with its -c switch to get a resumable transfer. The copy (cp) and move (mv) commands don't provide this option.

Is it possible to use wget to copy these files from one directory to another on my own system?

8 Answers

Yes, but you need a web server set up to serve the files you need to copy.

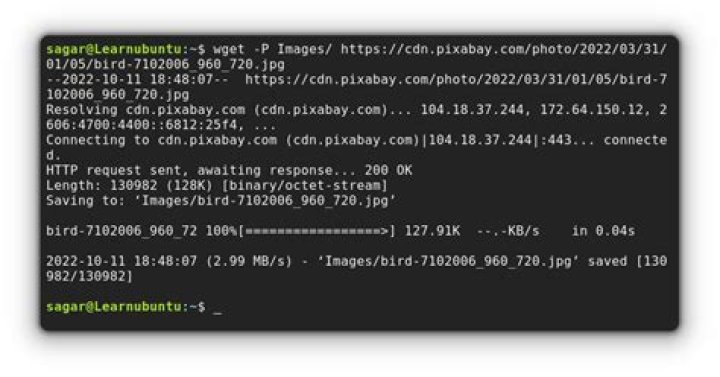

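Try something like this. In one terminal, start a simple web server:

cd /etc/
python -m SimpleHTTPServer

SimpleHTTPServer is a Python 2 module; with Python 3 the equivalent is python3 -m http.server. Then open another terminal and run:

wget

The passwd file will be downloaded to your current directory, so if you run wget from your home directory, you are in effect copying it from /etc/ to your home directory.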

I'm not sure why you want to do this, but yes, as you can see, it is possible in principle.

It would probably make more sense to use rsync instead. For example:

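rsync -aP /source ~/destination

The -P flag is equivalent to --partial --progress. --partial keeps incompletely transferred files instead of deleting them if there is an interruption, so running the same command again does not throw away what was already copied. Note that when both paths are local, rsync defaults to --whole-file, so a kept partial file may be copied again from the start; you may need --append-verify (which appends to the existing data and then verifies the whole file) to actually continue from where the copy stopped.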

This way, there is no need to bother with a local web server, which wget would require.

While I strongly recommend rsync, you can use curl without running an HTTP server:

curl -O -C - file:///path/to/some/file

curl is different from wget, but it is just as powerful, if not more so. It's always handy to have on any system.

From man curl:

-C, --continue-at <offset>
Continue/Resume a previous file transfer at the given offset. Use "-C -" to tell curl to automatically find out where/how to resume the transfer.

-O, --remote-name
Write output to a local file named like the remote file we get.

lftp can handle local file systems without the need for a server.

lftp - Sophisticated file transfer program

lftp can handle several file access methods - ftp, ftps, http, https, hftp, fish, sftp and file (https and ftps are only available when lftp is compiled with GNU TLS or OpenSSL library).

Besides FTP-like protocols, lftp has support for BitTorrent protocol as `torrent' command. Seeding is also supported.

Reference: man lftp

To copy a single file (use mget for multiple files or with a * wildcard):

lftp -c "get -c file:///<absolute-path-to-file>"

To copy a folder:

lftp -c "mirror -c file:///<absolute-path-to-directory>"

The first -c is for command; the second -c means continue whenever possible.

For spaces in paths, either escaping with \ as in the shell or %20 as in a web URL works for me.

It's possible, but you need a web server such as Apache or nginx. With Apache, you can copy a file like this:

wget

This works because the Apache server's home directory (document root) is /var/www, so files placed there are served over HTTP.

Another way, using dd:

- Check the destination file size using the stat or ls -l command.
- Copy using:

dd if=<source-file-path> iflag=skip_bytes skip=<dest-file-size> oflag=seek_bytes seek=<dest-file-size> of=<dest-file-path>

Example:

$ ls -l /home/user/u1404_64_d.iso

-rw-rw-r-- 1 user user 147186688 Jan 8 17:01 /home/user/u1404_64_d.iso

$ dd if=/boot/grml/u1404_64_d.iso \
    iflag=skip_bytes skip=147186688 oflag=seek_bytes seek=147186688 \
    of=/home/user/u1404_64_d.iso

1686798+0 records in

1686798+0 records out

863640576 bytes (864 MB) copied, 15.1992 s, 56.8 MB/s

$ md5sum /boot/grml/u1404_64_d.iso /home/user/u1404_64_d.iso

dccff28314d9ae4ed262cfc6f35e5153 /boot/grml/u1404_64_d.iso

dccff28314d9ae4ed262cfc6f35e5153 /home/user/u1404_64_d.iso

This can be harmful, as it overwrites the file without any check. Here is a better function that checks the hash before continuing:

ddc () {
    local hashcheck ofpath ofsize ifhash ofhash a
    # enable hash check (reading both files takes a long time)
    hashcheck=true
    # if the destination is a folder, append the source file name; otherwise treat it as a file name
    if [ -d "$2" ]
    then
        ofpath="$2/$(basename "$1")"
    else
        ofpath="$2"
    fi
    # check whether the destination file exists
    if [ ! -f "$ofpath" ]
    then
        a="n"
    else
        ofsize=$(stat -c "%s" "$ofpath")
        # hash the already-copied part of the source and the whole destination
        # (note: [ $hashcheck ] would be true even for hashcheck=false, so compare explicitly)
        if [ "$hashcheck" = true ]
        then
            ifhash=$(dd if="$1" iflag=count_bytes count="$ofsize" 2>/dev/null | md5sum | awk '{print $1}')
            #ifhash=$(head -c "$ofsize" "$1" | md5sum | awk '{print $1}')
            ofhash=$(md5sum "$ofpath" | awk '{print $1}')
            # compare hashes before continuing
            if [ "$ifhash" = "$ofhash" ]
            then
                a="y"
            else
                echo -e "Files' MD5 hashes do not match. Do you want to continue?\n(Y) Continue copy, (N) Start over, (Other) Cancel"
                read -r a
            fi
        else
            a="y"
        fi
    fi
    case $a in
        [yY])
            echo -e "Continue...\ncopy $1 to $ofpath"
            dd if="$1" iflag=skip_bytes skip="$ofsize" oflag=seek_bytes seek="$ofsize" of="$ofpath"
            ;;
        [nN])
            echo -e "Start over...\ncopy $1 to $ofpath"
            dd if="$1" of="$ofpath"
            ;;
        *)
            echo "Cancelled!"
            ;;
    esac
}

Use:

ddc <source-file> <destination-file-or-folder>

Example:

$ ls -l /home/user/u1404_64_d.iso

-rw-rw-r-- 1 user user 241370112 Jan 8 17:09 /home/user/u1404_64_d.iso

$ ddc /boot/grml/u1404_64_d.iso /home/user/u1404_64_d.iso

Continue...
copy /boot/grml/u1404_64_d.iso to /home/user/u1404_64_d.iso

1502846+0 records in

1502846+0 records out

769457152 bytes (769 MB) copied, 13.0472 s, 59.0 MB/s

Still another way:

Get the remaining size to copy:

echo `stat -c "%s" <source-file>`-`stat -c "%s" <destination-file>` | bc

Redirect the output of tail to append it to the destination:

tail -c <remaining-size> <source-file> >> <destination-file>
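The same two steps can be done without bc by using shell arithmetic. This minimal sketch (hypothetical function name tail_resume, assuming GNU stat and GNU cmp for its -n byte limit) also compares the bytes already in the destination with the start of the source before appending, because tail only counts bytes and would happily extend a corrupted partial file.

```shell
#!/bin/sh
# tail_resume (hypothetical name): append the missing tail of SOURCE to
# DESTINATION. Assumes GNU stat (-c %s) and GNU cmp (-n LIMIT).
tail_resume() {
    local src=$1 dst=$2 src_size dst_size remaining

    src_size=$(stat -c %s -- "$src") || return 1
    dst_size=$(stat -c %s -- "$dst") || return 1

    # Shell arithmetic replaces the `echo ... | bc` step.
    remaining=$((src_size - dst_size))
    if [ "$remaining" -lt 0 ]; then
        echo "tail_resume: $dst is larger than $src" >&2
        return 1
    fi

    # Only append if the destination really is a prefix of the source.
    if ! cmp -s -n "$dst_size" "$src" "$dst"; then
        echo "tail_resume: $dst does not match the start of $src" >&2
        return 1
    fi

    # tail -c N prints the last N bytes of the file.
    tail -c "$remaining" -- "$src" >> "$dst"
}

# Usage:
#   tail_resume /boot/grml/u1404_64_d.iso /home/user/u1404_64_d.iso
```

The prefix check reads the whole partial destination once, so like ddc's hash check it costs time on very large files; drop it if you trust the partial copy.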

Example:

$ echo `stat -c "%s" /boot/grml/u1404_64_d.iso`-`stat -c "%s" /home/user/u1404_64_d.iso` | bc

433049600

$ tail -c 433049600 /boot/grml/u1404_64_d.iso >> /home/user/u1404_64_d.iso

$ md5sum /boot/grml/u1404_64_d.iso /home/user/u1404_64_d.iso

dccff28314d9ae4ed262cfc6f35e5153 /boot/grml/u1404_64_d.iso

dccff28314d9ae4ed262cfc6f35e5153 /home/user/u1404_64_d.iso

Since time seems to be an issue for you, another approach is to use the split command to break a large file into smaller pieces, e.g.,

split -b 1024m BIGFILE PIECES

to create 1 GByte pieces named PIECESaa, PIECESab, ... PIECESzz.

Once these are created, you would use something like

cat PIECES?? >/some/where/else/BIGFILE

to reconstruct BIGFILE, or, again because of your time concerns:

mkdir done
>/some/where/else/BIGFILE
for piece in PIECES??
do
    cat "$piece" >>/some/where/else/BIGFILE
    status=$?
    if [[ $status -eq 0 ]]
    then
        mv "$piece" done
    else
        break
    fi
done

If the 'copy' fails, you can move the files from ./done back to . and try again once the time issue is resolved. See this as an alternative when wget is not possible. It is something I have used over the years when copying to a remote location where something like plain FTP is the only transport available for the pieces.
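A variant of the loop above, written as a function, is sketched below. It is not the answer author's exact script: the name reassemble_pieces and its arguments are hypothetical, and it assumes GNU coreutils (truncate, stat -c). Instead of leaving a half-appended piece in the destination when cat fails, it cuts the destination back to its size before that piece, so rerunning the same command continues with the pieces not yet moved into done/ rather than starting over.

```shell
#!/bin/sh
# reassemble_pieces (hypothetical name) PREFIX DEST
# Appends PREFIX?? pieces (as created by `split -b ... BIGFILE PREFIX`) to DEST
# in glob order, moving each piece into ./done once it has been appended.
# For a fresh copy, empty DEST first (e.g. `: > DEST`); on a rerun, leave it.
reassemble_pieces() {
    local prefix=$1 dest=$2 piece before

    mkdir -p done || return 1
    [ -e "$dest" ] || : > "$dest" || return 1

    for piece in "$prefix"??; do
        [ -e "$piece" ] || continue      # pattern matched no files
        before=$(stat -c %s -- "$dest") || return 1
        if cat -- "$piece" >> "$dest"; then
            mv -- "$piece" done/ || return 1
        else
            # Undo the partial append so a rerun can resume cleanly.
            truncate -s "$before" -- "$dest"
            echo "reassemble_pieces: failed on $piece" >&2
            return 1
        fi
    done
}

# Usage:
#   split -b 1024m BIGFILE PIECES
#   : > /some/where/else/BIGFILE
#   reassemble_pieces PIECES /some/where/else/BIGFILE
```

If the destination itself becomes unreachable, the truncate step can fail too; in that case fall back to the approach described above of moving the pieces back from ./done and starting over.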