Show that the determinant of $A$ is equal to the product of its eigenvalues

Show that the determinant of a matrix $A$ is equal to the product of its eigenvalues $\lambda_i$.

So I'm having a tough time figuring this one out. I know that I have to work with the characteristic polynomial of the matrix $\det(A-\lambda I)$. But, when considering an $n \times n$ matrix, I do not know how to work out the proof. Should I just use the determinant formula for any $n \times n$ matrix? I'm guessing not, because that is quite complicated. Any insights would be great.

$\endgroup$ 68 Answers

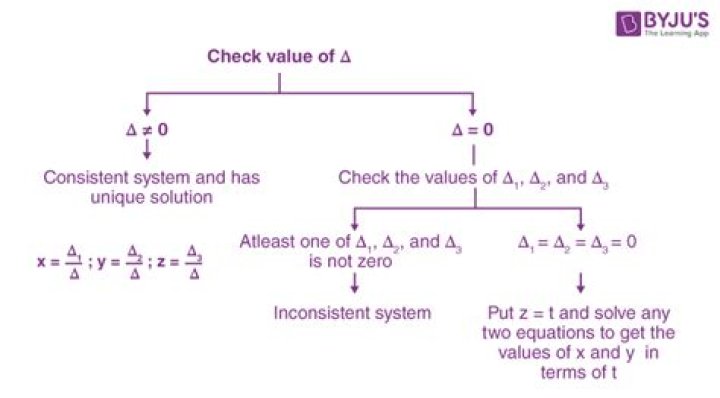

$\begingroup$Suppose that $\lambda_1, \ldots, \lambda_n$ are the eigenvalues of $A$, counted with algebraic multiplicity. Then the $\lambda$s are also the roots of the characteristic polynomial, i.e.

$$\begin{array}{rcl} \det (A-\lambda I)=p(\lambda)&=&(-1)^n (\lambda - \lambda_1 )(\lambda - \lambda_2)\cdots (\lambda - \lambda_n) \\ &=&(-1) (\lambda - \lambda_1 )(-1)(\lambda - \lambda_2)\cdots (-1)(\lambda - \lambda_n) \\ &=&(\lambda_1 - \lambda )(\lambda_2 - \lambda)\cdots (\lambda_n - \lambda) \end{array}$$

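The first equality follows from the factorization of a polynomial given its roots; the leading (highest degree) coefficient $(-1)^n$ comes from the product of the diagonal entries $(a_{11}-\lambda)\cdots(a_{nn}-\lambda)$, which is the only term in the expansion of the determinant that contains $\lambda^n$.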

Now, by setting $\lambda$ to zero (simply because it is a variable) we get on the left side $\det(A)$, and on the right side $\lambda_1 \lambda_2\cdots\lambda_n$, that is, we indeed obtain the desired result

$$ \det(A) = \lambda_1 \lambda_2\cdots\lambda_n$$

So the determinant of the matrix is equal to the product of its eigenvalues.
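As a concrete check of the argument, take the illustrative matrix $A=\begin{pmatrix}2&1\\1&2\end{pmatrix}$ (chosen here for convenience, not taken from the question), whose eigenvalues are $1$ and $3$:

$$\det(A-\lambda I)=\begin{vmatrix}2-\lambda&1\\1&2-\lambda\end{vmatrix}=(2-\lambda)^2-1=\lambda^2-4\lambda+3=(1-\lambda)(3-\lambda)$$

Setting $\lambda=0$ on both sides gives $\det(A)=3=1\cdot 3$.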

$\endgroup$ 11 $\begingroup$I am a beginning Linear Algebra learner and this is just my humble opinion.

One idea presented above is that

Suppose that $\lambda_1,\ldots,\lambda_n$ are eigenvalues of $A$.

Then the $\lambda$s are also the roots of the characteristic polynomial, i.e.

$$\det(A-\lambda I)=(\lambda_1-\lambda)(\lambda_2-\lambda)\cdots(\lambda_n-\lambda).$$

Now, by setting $\lambda$ to zero (simply because it is a variable) we get on the left side $\det(A)$, and on the right side $\lambda_1\lambda_2\ldots \lambda_n$, that is, we indeed obtain the desired result

$$\det(A)=\lambda_1\lambda_2\cdots\lambda_n.$$

I don't think that this works generally, but only for the case when $\det(A) = 0$.

Because, when we write down the characteristic equation, we use the relation $\det(A - \lambda I) = 0$. Following the same logic, the only case where $\det(A - \lambda I) = \det(A) = 0$ is $\lambda = 0$. The relation $\det(A - \lambda I) = 0$ must hold even in the special case $\lambda = 0$, which implies $\det(A) = 0$.
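One way to probe this concern is to treat $\det(A-\lambda I)$ as a polynomial in $\lambda$ and compare it with the factored form at values of $\lambda$ that are not eigenvalues. Below is a minimal sketch in plain Python, assuming an illustrative $2\times 2$ matrix with eigenvalues $1$ and $3$; the matrix and all helper names are made up for this check and do not come from the thread.

```python
# Illustrative matrix (an assumption for this sketch); its eigenvalues are 1 and 3.
A = [[2, 1],
     [1, 2]]
EIGENVALUES = (1, 3)

def det2(m):
    """Determinant of a 2x2 matrix given as nested lists."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def char_poly(m, t):
    """Evaluate det(m - t*I) for a 2x2 matrix m at the number t."""
    shifted = [[m[0][0] - t, m[0][1]],
               [m[1][0], m[1][1] - t]]
    return det2(shifted)

def factored(t):
    """Evaluate (lambda_1 - t) * (lambda_2 - t)."""
    l1, l2 = EIGENVALUES
    return (l1 - t) * (l2 - t)

# The two sides agree at every t tried, not only at the eigenvalues.
for t in range(-5, 6):
    assert char_poly(A, t) == factored(t)

# At t = 0 the left side is det(A) and the right side is the product 1 * 3.
print(char_poly(A, 0), det2(A), factored(0))
```

The equation $\det(A-\lambda I)=0$ holds only when $\lambda$ is an eigenvalue, but the factorization is an identity between two polynomials in $\lambda$, so substituting $\lambda=0$ does not require $0$ to be an eigenvalue.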

UPDATED POST

Here I propose a way to prove the theorem for the 2 by 2 case. Let $A$ be a 2 by 2 matrix.

$$ A = \begin{pmatrix} a_{11} & a_{12}\\ a_{21} & a_{22}\\\end{pmatrix}$$

The idea is to use a certain property of determinants,

$$ \begin{vmatrix} a_{11} + b_{11} & a_{12} \\ a_{21} + b_{21} & a_{22}\\\end{vmatrix} = \begin{vmatrix} a_{11} & a_{12}\\ a_{21} & a_{22}\\\end{vmatrix} + \begin{vmatrix} b_{11} & a_{12}\\b_{21} & a_{22}\\\end{vmatrix}$$

Let $ \lambda_1$ and $\lambda_2$ be the 2 eigenvalues of the matrix $A$. (The eigenvalues can be distinct, or repeated, real or complex it doesn't matter.)

The two eigenvalues $\lambda_1$ and $\lambda_2$ must satisfy the following condition :

$$\det (A - \lambda I) = 0 $$ where $\lambda$ is an eigenvalue of $A$.

Therefore, $$\begin{vmatrix} a_{11} - \lambda & a_{12} \\ a_{21} & a_{22} - \lambda\\\end{vmatrix} = 0 $$

Therefore, using the property of determinants provided above, I will try to decompose the determinant into parts.

$$\begin{vmatrix} a_{11} - \lambda & a_{12} \\ a_{21} & a_{22} - \lambda\\\end{vmatrix} = \begin{vmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} - \lambda\\\end{vmatrix} - \begin{vmatrix} \lambda & 0 \\ a_{21} & a_{22} - \lambda\\\end{vmatrix}= \begin{vmatrix} a_{11} & a_{12} \\ a_{21} & a_{22}\\\end{vmatrix} - \begin{vmatrix} a_{11} & a_{12} \\ 0 & \lambda \\\end{vmatrix}-\begin{vmatrix} \lambda & 0 \\ a_{21} & a_{22} - \lambda\\\end{vmatrix}$$

The final determinant can be further reduced.

$$ \begin{vmatrix} \lambda & 0 \\ a_{21} & a_{22} - \lambda\\\end{vmatrix} = \begin{vmatrix} \lambda & 0 \\ a_{21} & a_{22} \\\end{vmatrix} - \begin{vmatrix} \lambda & 0\\ 0 & \lambda\\\end{vmatrix} $$

Substituting the final determinant, we will have

$$ \begin{vmatrix} a_{11} - \lambda & a_{12} \\ a_{21} & a_{22} - \lambda\\\end{vmatrix} = \begin{vmatrix} a_{11} & a_{12} \\ a_{21} & a_{22}\\\end{vmatrix} - \begin{vmatrix} a_{11} & a_{12} \\ 0 & \lambda \\\end{vmatrix} - \begin{vmatrix} \lambda & 0 \\ a_{21} & a_{22} \\\end{vmatrix} + \begin{vmatrix} \lambda & 0\\ 0 & \lambda\\\end{vmatrix} = 0 $$

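For a polynomial equation $$ a_{n}\lambda^n + a_{n-1}\lambda^{n-1} + \cdots + a_{1}\lambda + a_{0} = 0$$ the product of the roots is $(-1)^n a_0/a_n$. Here $n = 2$ and the leading coefficient is $1$ (it comes from the last determinant, which equals $\lambda^2$), so the product of the roots is the constant term $a_{0}$.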

From the decomposed determinant, the only term which doesn't involve $\lambda$ would be the first term

$$ \begin{vmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} \\\end{vmatrix} = \det (A) $$

Therefore, the product of roots aka product of eigenvalues of $A$ is equivalent to the determinant of $A$.
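The 2 by 2 argument can be spot-checked numerically. The sketch below is only an illustration under stated assumptions: the helper names and the three sample matrices are made up, and the eigenvalues are obtained from the quadratic formula applied to $\lambda^2 - (a_{11}+a_{22})\lambda + \det(A) = 0$, using `cmath` so that complex and repeated eigenvalues are covered.

```python
import cmath

def eigenvalues_2x2(a, b, c, d):
    """Roots of lambda^2 - (a + d)*lambda + (a*d - b*c) = 0 for [[a, b], [c, d]]."""
    trace = a + d
    det = a * d - b * c
    root = cmath.sqrt(trace * trace - 4 * det)
    return (trace + root) / 2, (trace - root) / 2

def det_2x2(a, b, c, d):
    """Determinant of the matrix [[a, b], [c, d]]."""
    return a * d - b * c

# Sample matrices (assumptions): distinct real, complex, and repeated eigenvalues.
examples = [(2, 1, 1, 2), (0, -1, 1, 0), (1, 1, 0, 1)]

for entries in examples:
    l1, l2 = eigenvalues_2x2(*entries)
    # The product of the eigenvalues should match the determinant.
    assert cmath.isclose(l1 * l2, det_2x2(*entries), abs_tol=1e-12)
    print(entries, l1, l2, det_2x2(*entries))
```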

I am having difficulty generalizing this idea of proof to the $n$ by $n$ case though, as it is complex and time consuming for me.

$\endgroup$ 3 $\begingroup$From eigen decomposition

$A = S \Lambda S^{-1}$, where $\Lambda$ is the diagonal matrix whose diagonal entries are the eigenvalues of $A$ (this assumes $A$ is diagonalizable).

$\implies \det(A) = \det(S)\,\det(\Lambda)\,\det(S^{-1})$

$\implies \det(A) = \det(\Lambda)$, since $\det(S)\det(S^{-1}) = 1$

$\det(\Lambda)$ is nothing but $\lambda_1\lambda_2\cdots\lambda_n$
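The cancellation of the two outer determinants can be checked on a small case. This is a rough sketch with exact rational arithmetic, assuming an illustrative eigenvector matrix and eigenvalues $1$ and $3$; the names `S`, `LAM`, `matmul`, `det2` and `inverse2` are invented for the example.

```python
from fractions import Fraction

def matmul(x, y):
    """Product of two matrices given as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
             for j in range(len(y[0]))] for i in range(len(x))]

def det2(m):
    """Determinant of a 2x2 matrix."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def inverse2(m):
    """Exact inverse of an invertible 2x2 matrix."""
    d = Fraction(det2(m))
    return [[m[1][1] / d, -m[0][1] / d],
            [-m[1][0] / d, m[0][0] / d]]

# Assumed example: columns of S are eigenvectors, LAM holds the eigenvalues 1 and 3.
S = [[1, 1],
     [1, -1]]
LAM = [[1, 0],
       [0, 3]]

A = matmul(matmul(S, LAM), inverse2(S))

# det(S) * det(S^-1) = 1, so det(A) reduces to det(LAM) = 1 * 3.
assert det2(S) * det2(inverse2(S)) == 1
assert det2(A) == det2(LAM) == 3
print(A, det2(A))
```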

$\endgroup$ 4 $\begingroup$The approach I would use is to decompose the matrix into 3 matrices based on the eigenvalues.

Then you know that $\det(AB) = \det(A)\det(B)$, and that $\det(A^{-1}) = \dfrac{1}{\det(A)}$.

You can probably fill in the rest of the details from the article, depending on how rigorous your proof needs to be.

Edit: I just realized this won't work on all matrices, but it might give you an idea of an approach.
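A standard example of a matrix where this decomposition is unavailable (added here as an illustration, not taken from the linked article) is

$$A=\begin{pmatrix}1&1\\0&1\end{pmatrix},\qquad \det(A-\lambda I)=(1-\lambda)^2$$

The only eigenvalue is $1$, with algebraic multiplicity $2$, but $(A-I)v=0$ forces the second component of $v$ to be zero, so there is only one independent eigenvector and no invertible matrix of eigenvectors. The statement itself still holds when eigenvalues are counted with multiplicity: $\det(A)=1=1\cdot 1$.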

$\endgroup$ 4 $\begingroup$You must know the following:

== If we take an extension of the base field, then both the determinant and the trace of a (square) matrix remain unchanged when evaluated in the new field.

== Take a splitting field of the characteristic polynomial of $\;A\;$ and calculate this matrix's Jordan Canonical form. Since the latter is a triangular matrix, its determinant is the product of the elements on its main diagonal, and we know that the eigenvalues of $\;A\;$ appear on this diagonal, so we're done.

$\endgroup$ $\begingroup$Instead of assuming that the matrix is diagonalisable, as some of the previous answers do, we can use the Jordan form. Every square matrix over $\mathbb{C}$ is similar to a matrix $J$ in Jordan form: $$J=M^{-1}AM$$ for some invertible $M$. Since $J$ is triangular, its determinant is simply the product of its diagonal entries, which are the eigenvalues of $A$. That is, $$\det(J)=\prod_i{\lambda_i(A)}$$ where $\lambda_i(A)$ denotes the $i$th eigenvalue of $A$.

Computing the determinants, we have $$\det(J)=\det(M^{-1})\det(A)\det(M)$$ The determinants of $M$ and $M^{-1}$ cancel to give $1$, and so $$\det(J)=\det(A)$$ Combining our second and fourth equations we have the result: $$\det(A)=\prod_i{\lambda_i(A)}=\lambda_1\lambda_2\cdots\lambda_n$$

TL;DR:

$\det(A)=\det(M^{-1})\det(J)\det(M)$

$\implies \det(A) = \det(J)$.

And since $J$ is triangular and has eigenvalues along its diagonal, $\det(A)=\det(J)=\lambda_1\lambda_2\cdots\lambda_n$.
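To see the Jordan-form argument on a matrix that is not diagonalisable, here is a small sketch in plain Python. Everything in it is an assumption made for illustration: `J` is a hand-picked Jordan matrix with eigenvalues $2, 2, 5$, `M` is an arbitrary invertible matrix whose inverse `M_INV` was worked out by hand, and `A` is built as $MJM^{-1}$ rather than starting from a given $A$.

```python
def matmul(x, y):
    """Product of two square matrices given as nested lists."""
    n = len(x)
    return [[sum(x[i][k] * y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def det(m):
    """Determinant by cofactor expansion along the first row."""
    if len(m) == 1:
        return m[0][0]
    total = 0
    for j, entry in enumerate(m[0]):
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * entry * det(minor)
    return total

# Jordan matrix: a 2x2 block for eigenvalue 2 and a 1x1 block for eigenvalue 5.
J = [[2, 1, 0],
     [0, 2, 0],
     [0, 0, 5]]

# An invertible change of basis and its inverse (both chosen for this example).
M = [[1, 0, 0],
     [1, 1, 0],
     [0, 1, 1]]
M_INV = [[1, 0, 0],
         [-1, 1, 0],
         [1, -1, 1]]

A = matmul(matmul(M, J), M_INV)

# A is no longer triangular, yet det(A) equals the product of J's diagonal.
assert det(A) == det(J) == 2 * 2 * 5
print(A, det(A))
```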

$\endgroup$ $\begingroup$A few places in this thread I noticed people raised issues about 'what if A doesn't have independent columns' or 'what if the determinant is 0'. I believe the following are all equivalent:

- 0 is an eigenvalue of A

- A has linearly dependent columns (or rows)

- $\det(A)=0$

- $\prod_i \lambda _i = 0$ (the product of eigenvalues of A)

so we can take care of this issue by saying "Suppose one of the above is true. Then $\det(A)=\prod_i \lambda _i = 0$; otherwise..." (note it is clear that 0 being an eigenvalue results in the product being 0)
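A small singular example (chosen here for illustration) shows all four conditions holding at once:

$$A=\begin{pmatrix}1&2\\2&4\end{pmatrix},\qquad \det(A-\lambda I)=(1-\lambda)(4-\lambda)-4=\lambda^2-5\lambda=\lambda(\lambda-5)$$

The second column is twice the first, the eigenvalues are $0$ and $5$, and $\det(A)=1\cdot 4-2\cdot 2=0=0\cdot 5$.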

$\endgroup$ $\begingroup$I think this is right...

Write $$A = \begin{pmatrix}a_{11} & a_{12} & \cdots & a_{1n}\\ \vdots & \vdots & & \vdots\\ a_{n1} & a_{n2} & \cdots & a_{nn} \end{pmatrix}$$

Let the $n$ eigenvalues of $A$ be $\lambda_1, \ldots, \lambda_n$. Finally, denote the characteristic polynomial of $A$ by $p(\lambda) = |\lambda I - A| = \lambda^n + c_{n-1}\lambda^{n-1} + \cdots + c_1\lambda + c_0$.

Note that since the eigenvalues of $A$ are the zeros of $p(\lambda)$, this implies that $p(\lambda)$ can be factorised as $p(\lambda) = (\lambda - \lambda_1)\cdots(\lambda - \lambda_n)$. Consider the constant term of $p(\lambda)$, $c_0$. The constant term of $p(\lambda)$ is given by $p(0)$, which can be calculated in two ways:

Firstly, $p(0) = (0 - \lambda_1)\cdots(0 - \lambda_n) = (-1)^n\lambda_1 \cdots \lambda_n$.

Secondly, $p(0) = |0I - A| = |-A| = (-1)^n |A|$.

Therefore $c_0 = (-1)^n\lambda_1 \cdots \lambda_n = (-1)^n |A|$, and so $\lambda_1 \cdots \lambda_n = |A|$.

That is, the product of the n eigenvalues of A is the determinant of A.
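The two evaluations of $p(0)$ can be sanity-checked on a small case. The following is a sketch under assumptions: the $3\times 3$ matrix is an arbitrary example whose characteristic polynomial happens to be $(\lambda-2)^2(\lambda-5)$, and the helper names are made up. It uses the $|\lambda I - A|$ convention from this answer.

```python
def det(m):
    """Determinant by cofactor expansion along the first row."""
    if len(m) == 1:
        return m[0][0]
    total = 0
    for j, entry in enumerate(m[0]):
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * entry * det(minor)
    return total

def p(m, t):
    """Evaluate the characteristic polynomial det(t*I - m) at the number t."""
    n = len(m)
    shifted = [[(t if i == j else 0) - m[i][j] for j in range(n)]
               for i in range(n)]
    return det(shifted)

# Example matrix (an assumption); its eigenvalues are 2, 2 and 5.
A = [[1, 1, 0],
     [-1, 3, 0],
     [3, -3, 5]]
EIGENVALUES = (2, 2, 5)
n = len(A)

# Two monic cubics that agree at more than three points are the same polynomial.
for t in range(-3, 4):
    assert p(A, t) == (t - 2) ** 2 * (t - 5)

product = 1
for lam in EIGENVALUES:
    product *= lam

# p(0) computed both ways: (-1)^n * product of eigenvalues and (-1)^n * det(A).
assert p(A, 0) == (-1) ** n * product == (-1) ** n * det(A)
print(p(A, 0), product, det(A))
```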

$\endgroup$ 3